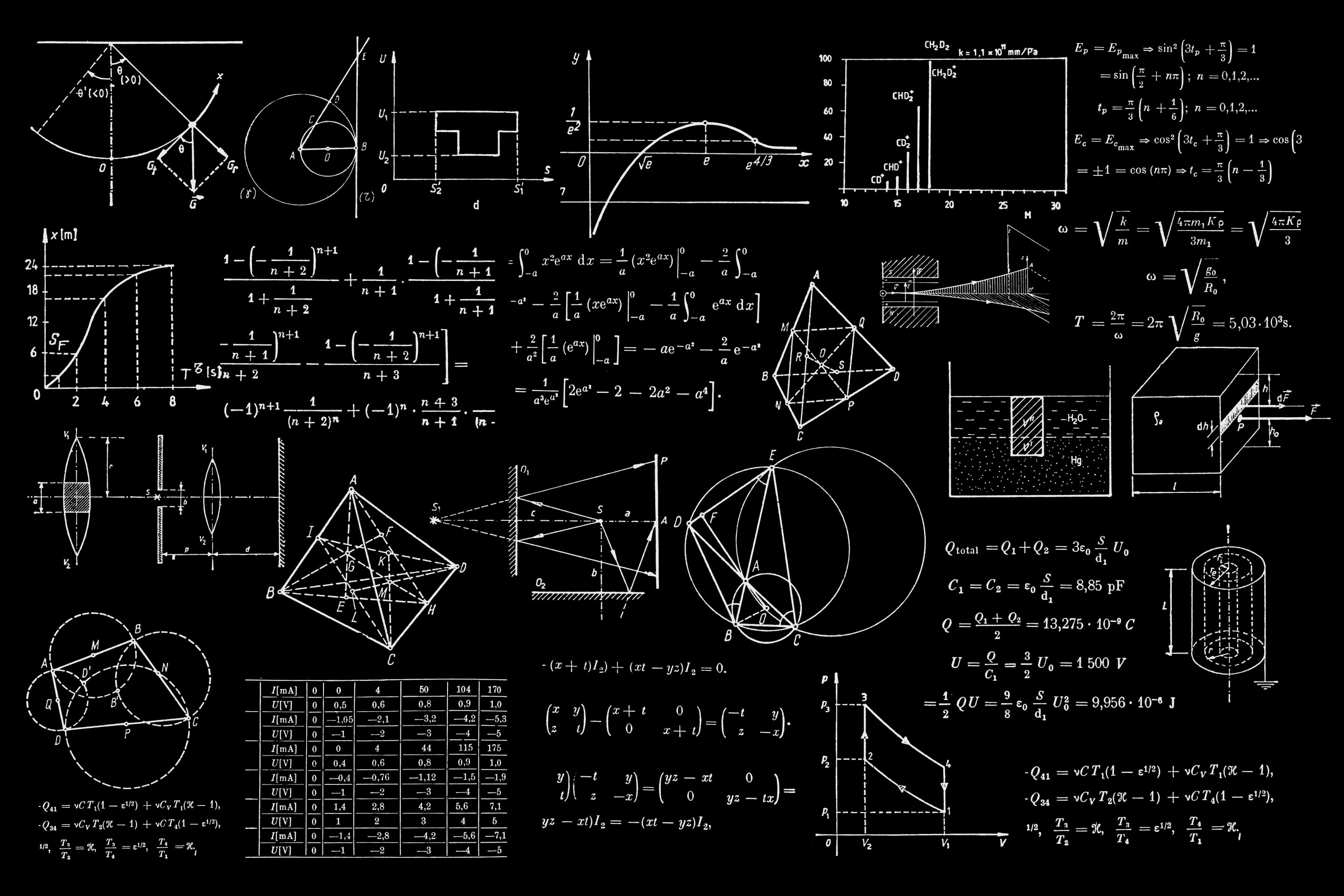

Mathematical models that predict policy-driving scenarios – such as how a new pandemic might spread or the future amount of irrigation water needed worldwide – may be too complex and may be delivering ‘wrong’ answers, a new study reveals.

Experts are using increasingly detailed models to better predict phenomena or gain more accurate insights in a range of key areas, such as environmental/climate sciences, hydrology and epidemiology.

But the pursuit of complex models as tools to produce more accurate projections and predictions may not deliver because more complicated models tend to produce more uncertain estimates.

Researchers from the Universities of Birmingham, Princeton, Reading, Barcelona and Bergen published their findings today in Science Advances. They reveal that expanding models without checking how extra detail adds uncertainty limits the models’ usefulness as tools to inform policy decisions in the real world.

Arnald Puy, Associate Professor in Social and Environmental Uncertainties at the University of Birmingham, commented: “As science keeps on unfolding secrets, models keep getting bigger – integrating new discoveries to better reflect the world around us. We assume that more detailed models produce better predictions because they better match reality.

“And yet pursuing ever-complex models may not deliver the results we seek, because adding new parameters brings new uncertainties into the model. These new uncertainties pile on top of the uncertainties already there at every model upgrade stage, making the model’s output fuzzier at every step of the way.”

This tendency to produce less accurate results affects any model that lacks training or validation data against which its output can be checked. That includes all global models, such as those focused on climate change, hydrology, food production and epidemiology, as well as models projecting estimates into the future, regardless of the scientific field.

Researchers recommend that the drive to produce increasingly detailed mathematical models as a means to get sharper estimates should be reassessed.

“We suggest that modelers should calculate the model’s effective dimensions (the number of influential parameters and their highest-order interaction) before making the model more complex. This allows them to check how the addition of model complexity affects the uncertainty in the output. Such information is especially valuable for models aiming to play a role in policy making,” added Dr. Puy. “Both modelers and policy makers benefit from understanding any uncertainty generated when a model is upgraded with novel mechanisms.

“Modelers tend not to submit their models to uncertainty and sensitivity analysis but keep on adding detail. Not many scholars are interested in running such an analysis on their model if it risks showing that the emperor runs naked and its alleged sharp estimates are just a mirage.”
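As a rough illustration of the first half of that recommendation (counting influential parameters), the sketch below estimates total-order sensitivity indices for a toy model using the Jansen Monte Carlo estimator. It is not the study's own code. The function names, the toy model, the assumption of independent inputs uniform on [0, 1], and the 0.05 cut-off for "influential" are all hypothetical choices made for this example, and the sketch does not attempt to measure the highest-order interaction.

```python
import random


def total_order_indices(model, k, n=2000, seed=0):
    """Estimate total-order sensitivity indices with the Jansen estimator.

    Assumes k independent inputs, each uniform on [0, 1] (an illustrative
    assumption, not something taken from the study).
    """
    rng = random.Random(seed)
    # Two independent sample matrices, A and B, each with n rows and k columns.
    a = [[rng.random() for _ in range(k)] for _ in range(n)]
    b = [[rng.random() for _ in range(k)] for _ in range(n)]

    y_a = [model(row) for row in a]
    mean = sum(y_a) / n
    var = sum((y - mean) ** 2 for y in y_a) / (n - 1)

    indices = []
    for i in range(k):
        sq = 0.0
        for row_a, row_b, y in zip(a, b, y_a):
            # Copy of the A row with only column i taken from B.
            mixed = row_a[:i] + [row_b[i]] + row_a[i + 1:]
            sq += (y - model(mixed)) ** 2
        indices.append(sq / (2 * n * var))
    return indices


def count_influential(indices, threshold=0.05):
    """Count parameters whose total-order index exceeds a chosen cut-off."""
    return sum(1 for t in indices if t > threshold)


def toy_model(x):
    # Hypothetical model: the third input barely affects the output.
    return x[0] + 2.0 * x[1] + 0.01 * x[2]


indices = total_order_indices(toy_model, k=3)
print([round(t, 3) for t in indices])
print("influential parameters:", count_influential(indices))
```

In this toy case the third input contributes almost nothing to the output variance, so it adds a parameter without adding an influential one. Running the same count before and after a model upgrade is one simple way to see whether the extra detail raises the number of parameters that drive output uncertainty.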

Excess complexity prevents scholars and the public alike from pondering the appropriateness of the models’ assumptions, which are often highly questionable. Puy and his team note, for example, that global hydrological models assume that irrigation optimises crop production and water use – a premise at odds with the practices of traditional irrigators.
